AI in Air Traffic Control Has Gotten Complicated With All the Hype Flying Around

After three years covering aviation technology for industry publications, I’ve learned a lot about how AI is reshaping what controllers actually do inside those darkened radar rooms. Here’s what I found.

And here’s the thing — it’s not what most people think. It’s not robots replacing humans. It’s not some sci-fi fantasy still decades away. Right now, at facilities across the United States and Europe, controllers are working alongside AI-assisted tools that flat-out didn’t exist in 2019. The shift is already happening. Most people just haven’t caught up to it yet.

When I first started interviewing controllers about AI integration, I expected pushback. What I found was more interesting — these professionals understand their own workload better than any outsider possibly could. They see the problem every single day.

Why ATC Needs AI — Managing a System at Its Limits

Probably should have opened with this section, honestly.

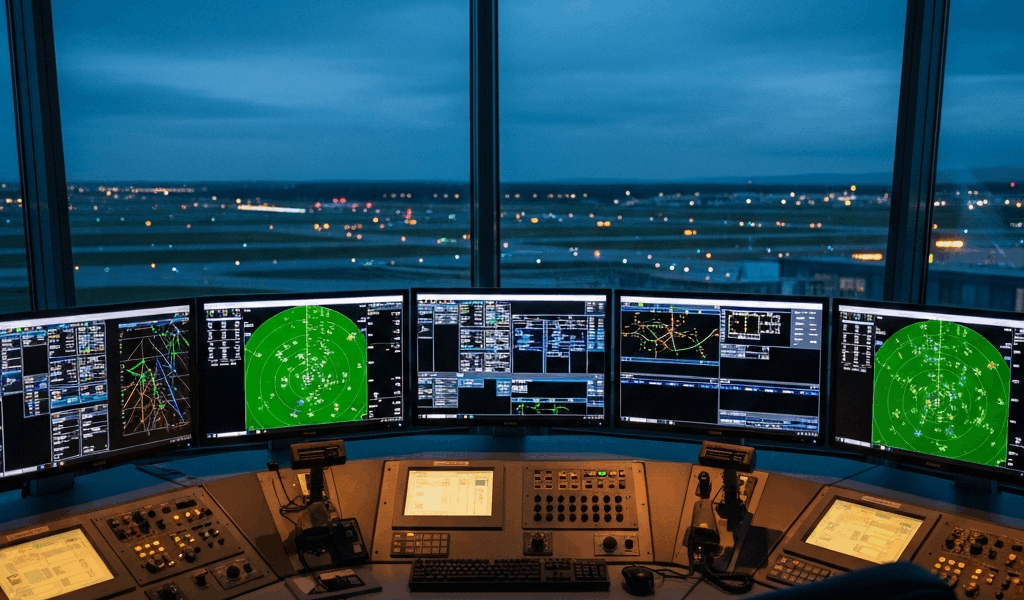

Modern air traffic control is, at its core, a human attention problem wearing a technological costume. A busy terminal approach facility might be tracking 150 to 200 aircraft at once, with every controller responsible for a sector’s share of them. Each one flying different speeds, altitudes, vectors. Each one needing fuel-efficient routing, weather avoidance, and safe separation from everything else sharing that airspace.

The separation standard? 1,000 feet vertical or three nautical miles horizontal for most terminal airspace. Sometimes 2.5 nautical miles on final approach, under specific conditions. Controllers maintain this using voice communications, radar displays, and instinct hammered into them through thousands of hours of experience.
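To make that rule concrete, here is a minimal sketch of a separation check using the two minima quoted above. The flat local grid, the tuple layout, and the function name `is_separated` are assumptions made for illustration, not how any operational system represents traffic.

```python
import math

VERTICAL_MIN_FT = 1000.0    # vertical minimum quoted in the text
HORIZONTAL_MIN_NM = 3.0     # horizontal minimum quoted in the text


def is_separated(a, b,
                 horizontal_min_nm=HORIZONTAL_MIN_NM,
                 vertical_min_ft=VERTICAL_MIN_FT):
    """Return True if two aircraft meet at least one of the two minima.

    Each aircraft is an (x_nm, y_nm, altitude_ft) tuple on a flat local
    grid, which is a simplification chosen for this sketch.
    """
    horizontal_nm = math.hypot(a[0] - b[0], a[1] - b[1])
    vertical_ft = abs(a[2] - b[2])
    # Separation holds if EITHER the lateral or the vertical minimum is met.
    return horizontal_nm >= horizontal_min_nm or vertical_ft >= vertical_min_ft


# Two aircraft 2 NM apart at the same altitude have lost separation.
same_level = is_separated((0.0, 0.0, 5000.0), (2.0, 0.0, 5000.0))
# The same pair 1,000 feet apart vertically is fine.
stacked = is_separated((0.0, 0.0, 5000.0), (2.0, 0.0, 6000.0))
```

The "either/or" is the important part: an aircraft pair needs only one of the two minima, which is why altitude assignments are such a common fix.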

Traffic keeps growing. Pre-pandemic, the FAA handled roughly 44,000 flights daily across the U.S. That number climbed back above 43,000 by 2023 and keeps creeping upward. Meanwhile, something else happened — something nobody fully anticipated. Drone traffic became real.

A Cessna 172 takes about 18 minutes to climb from 1,000 feet to 5,000 feet. A DJI Matrice 300 RTK hits 2,000 feet in roughly three minutes. Mix traditional general aviation with commercial drones, layer in scheduled air taxi operations the FAA is actively certifying right now, and you get airspace that moves completely differently than it did in 2015.

Controllers aren’t superhuman. They get fatigued. They make errors, approximately one per thousand operations in busy facilities, which is actually remarkable precision. But across tens of thousands of daily flights, that math gets uncomfortable fast. Staffing shortages make fatigue worse. Fatigue makes errors worse.

The Minneapolis Air Route Traffic Control Center — handling upper-level traffic across Minnesota, Wisconsin, and surrounding regions — was operating at 75 percent staffing in 2022. Controllers were pulling mandatory overtime. That’s the environment where AI stops being a feature and starts being a necessity.

Weather rerouting alone is brutal on controller attention. A thunderstorm complex develops over the Mississippi Valley. Controllers manually identify every affected flight, radio pilots with new routes, re-sequence arrivals, coordinate across adjacent sectors. Hours of work. Dozens of individual communications. AI sees that same weather system, predicts its movement, suggests optimized routes for all affected aircraft, and hands the controller three viable options instead of expecting them to calculate from scratch.

What AI Can Actually Do in ATC Today

But what is AI-assisted ATC, really? In essence, it’s a set of machine-learning tools that process radar data, flight plans, weather feeds, and historical patterns to identify problems before human controllers would naturally catch them.

NASA’s Airspace Technology Demonstration program — which I followed closely during site visits to facilities in Memphis and Dallas — is testing something called the Autonomous Airspace Operations System. Don’t let the name fool you. “Autonomous” here means the AI autonomously identifies problems and proposes solutions. Not that it autonomously controls aircraft. That distinction matters enormously.

The system uses machine learning trained on millions of flight samples. It learned to predict conflicts, meaning situations where two aircraft will violate separation standards if both hold their current trajectories, by chewing through radar data, flight plans, weather overlays, and historical patterns. Controllers report receiving conflict alerts 60 to 90 seconds before a conflict would actually develop.

Sixty seconds doesn’t sound like much. It changes everything.

A conflict alert 90 seconds out gives a controller time to issue a heading change, an altitude assignment, or a speed restriction. Pilots comply. The conflict dissolves before it becomes dangerous. Working without AI, a controller might spot that same conflict with only 10 seconds to spare, when radar blips are dangerously close and options have already collapsed. Emergency maneuvers. Stress spike. Safety margins compressed. The difference between those two scenarios is almost entirely about time.
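The lead time is easier to picture with numbers. The sketch below is not the machine-learning approach described above. It swaps in plain straight-line extrapolation, the simplest possible conflict probe, and assumes both aircraft are at the same altitude on a flat grid. The `Track` class, the function name, and the 120-second lookahead are all hypothetical choices for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class Track:
    x_nm: float    # east position, nautical miles
    y_nm: float    # north position, nautical miles
    vx_kt: float   # east velocity, knots
    vy_kt: float   # north velocity, knots


def seconds_to_conflict(a, b, min_sep_nm=3.0, lookahead_s=120.0):
    """Seconds until horizontal separation first drops below min_sep_nm.

    Assumes both aircraft hold their current velocity. Returns None if no
    loss of separation occurs within lookahead_s.
    """
    # Relative position (NM) and relative velocity (NM per second).
    rx, ry = b.x_nm - a.x_nm, b.y_nm - a.y_nm
    vx = (b.vx_kt - a.vx_kt) / 3600.0
    vy = (b.vy_kt - a.vy_kt) / 3600.0

    # Solve |r + v*t|^2 = min_sep^2, a quadratic in t.
    qa = vx * vx + vy * vy
    qb = 2.0 * (rx * vx + ry * vy)
    qc = rx * rx + ry * ry - min_sep_nm ** 2

    if qc < 0:
        return 0.0          # already inside the separation minimum
    if qa == 0:
        return None         # no relative motion, distance never changes
    disc = qb * qb - 4.0 * qa * qc
    if disc < 0:
        return None         # paths never come within min_sep_nm
    t = (-qb - math.sqrt(disc)) / (2.0 * qa)   # earliest crossing time
    if t < 0 or t > lookahead_s:
        return None
    return t


# Head-on pair, 15 NM apart, each flying 240 knots toward the other.
eastbound = Track(0.0, 0.0, 240.0, 0.0)
westbound = Track(15.0, 0.0, -240.0, 0.0)
warning_s = seconds_to_conflict(eastbound, westbound)
```

With a 480-knot closing speed, the pair has 12 NM to burn before hitting the 3 NM minimum, which works out to about 90 seconds of warning. Real systems have to cope with turns, climbs, wind, and pilot intent, which is where the trained models earn their keep.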

Sequencing optimization is another major application. During peak arrival periods at Atlanta or Dallas-Fort Worth, dozens of aircraft funnel into landing sequences within a single hour. Controllers manually sequence them based on speed, altitude, heading, runway availability. One mistake — an aircraft sequenced too close to a slower one, or arriving too high to descend on profile — creates a domino effect that ripples through everything behind it.

AI sequencing systems I reviewed in testing environments can evaluate all arriving aircraft and generate sequences that minimize holding patterns, cut fuel burn, maintain safe separation, and maximize runway utilization, all at once. Controllers can accept the recommendation, modify it, or ignore it entirely. The system learns from every modification and improves over time. I find that feedback loop fascinating, and watching it operate never got old for me.
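For a feel of what a sequencer is juggling, here is a deliberately naive baseline: first-come, first-served ordering with a minimum gap between landings. It is not the optimizer described above, only a sketch of the problem it improves on. The 90-second gap, the callsigns, and the function name are assumptions made up for the example.

```python
def sequence_arrivals(etas_s, min_gap_s=90.0):
    """Assign landing times in ETA order with a minimum gap between landings.

    etas_s maps a callsign to its estimated arrival time in seconds.
    Returns a list of (callsign, scheduled_time_s, delay_s) tuples.
    """
    schedule = []
    previous_slot = None
    for callsign, eta in sorted(etas_s.items(), key=lambda item: item[1]):
        if previous_slot is None:
            slot = eta
        else:
            # Land at the ETA, or as soon as the gap behind the
            # previous arrival allows, whichever is later.
            slot = max(eta, previous_slot + min_gap_s)
        schedule.append((callsign, slot, slot - eta))
        previous_slot = slot
    return schedule


# Hypothetical arrivals: the second one is too close behind the first.
plan = sequence_arrivals({"AAA1": 0.0, "BBB2": 30.0, "CCC3": 200.0})
total_delay_s = sum(delay for _, _, delay in plan)
```

Here the second aircraft absorbs a 60-second delay and the third lands on time. An optimizing sequencer would also weigh fuel burn, wake categories, and runway assignment, and might reorder aircraft rather than simply pushing each one back.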

Eurocontrol’s SWIM (System Wide Information Management) initiative integrates similar AI capabilities across the entire European airspace rather than individual facilities. It monitors real-time weather, flow management, capacity constraints, and airline requests. Recommends ground delays, en route holds, speed adjustments at the network level. Prevents cascading delays rather than scrambling to manage them after they’ve already developed. That’s the significant shift: moving from reactive to predictive.

One detail that genuinely surprised me during facility visits: controllers don’t actually need AI to provide this level of analysis. They’re perfectly capable of doing it manually — they’re trained for it. What they don’t have is time. A controller in a busy sector doesn’t have an hour to manually analyze weather impacts and calculate fuel-efficient re-routes for 40 aircraft. They have maybe 7 minutes before another wave of flights demands attention.

AI compresses an hour of analysis into 90 seconds of decision-making. Controllers can finally implement optimizations they’re theoretically trained to perform but never had time to attempt. Don’t make my mistake of underestimating how significant that gap between capability and available time actually is — I dismissed it early in my reporting and had to completely reframe my understanding later.

The Human-AI Partnership — Why Replacement Talk Misses the Point

Every time I publish something about AI and ATC, someone asks when controllers will be replaced. The question reflects a fundamental misunderstanding of what’s actually happening — and honestly, it’s a little frustrating at this point.

Controllers will not disappear. Consider a straightforward scenario: a Learjet declares an emergency regarding a potential engine fire. The crew requests immediate descent from 35,000 feet to 10,000 feet. A controller must simultaneously handle all of the following:

- Evaluate whether any aircraft occupy the requested descent corridor

- Calculate if the emergency descent creates conflicts with traffic below

- Determine if that descent profile is actually safe — rapid descents carry their own risks in certain cabin configurations

- Coordinate with adjacent sectors to clear arrival airspace

- Notify surrounding traffic of the emergency

- Assess whether the emergency actually requires that specific descent or if alternatives exist

- Monitor the aircraft’s actual behavior against expectations throughout the descent

An AI system can flag the emergency and highlight aircraft in the descent path. A human controller synthesizes that information with their understanding of aerodynamics, emergency procedures, crew psychology, and the specific aircraft type involved. They make judgment calls that reflect layered priorities — safety first, but also operational efficiency, fairness to waiting traffic, and an awareness of crew anxiety that might actively affect their decision-making mid-emergency.

I watched a controller handle a situation last year where a pilot reported a medical emergency aboard a regional jet — a CRJ-700, for what it’s worth. The pilot requested immediate descent and diversion. Three options existed: divert to the nearest airport, a smaller facility with minimal emergency equipment; divert to a major medical center airport requiring a longer flight time; or continue to the planned destination, which had a major hospital literally adjacent to the field. The controller asked the pilot about the patient’s condition. Consulted with the airline dispatcher about fuel state. Made a decision that balanced medical urgency against operational reality in real time.

No current AI system makes that decision. These systems provide information. The human makes the call.

What AI actually does is eliminate routine cognitive load. Instead of manually tracking conflicting trajectories, the controller gets a list. Instead of calculating fuel-efficient re-routes, they approve AI recommendations. That freed attention goes toward judgment calls, exception handling, and genuine decision-making — the parts that keep aviation safe.

The trust gap remains real. Controller union representatives I interviewed expressed legitimate concerns about system reliability. What happens if conflict detection misses something? What happens when sequencing recommendations fail in edge cases controllers haven’t seen before? Controllers have built trust in their own judgment across thousands of hours of experience. Trusting a black-box algorithm that might fail in unpredictable ways represents a real psychological and operational shift — not irrational resistance, just honest caution.

The FAA and NASA have recognized this. Their testing protocols include what they call “controller override” validation — proving controllers can always disagree with AI recommendations without system penalty or awkwardness built into the interface. The goal is augmentation, not automation. The controller stays the final authority. AI functions as a sophisticated decision-support tool, not a supervisor.

Airlines face parallel trust challenges at the passenger level. A recommendation to delay a flight 12 minutes to avoid weather impacts a tight connection in Denver. The AI might make that call at the network level because the delay prevents three cascading cancellations downstream. But the passenger who misses Denver experiences a negative outcome they can directly trace to an automated decision — and the algorithm can’t explain in human terms why their specific flight was the one that got delayed. Building societal trust in those decisions requires transparency that current AI systems genuinely struggle to provide.

Solving that requires philosophy as much as engineering. The FAA is genuinely working on it — I’ve reviewed their human factors guidelines for AI in ATC, and they’re more sophisticated than most coverage gives them credit for. But implementation always lags behind guidelines. That was true in 1996 with the introduction of URET conflict probing tools, and it’s true now.

The real timeline for meaningful AI integration looks like this: by 2028, major terminal radar approach control facilities will have conflict detection AI integrated into standard displays — used routinely, not experimentally. By 2032, sequencing optimization and weather rerouting will be similarly embedded. By 2035, a controller walking into a busy facility will spend roughly 40 percent of their attention on tasks that currently consume about 80 percent of their time. That frees enormous capacity for judgment, emergency response, and strategic airspace management.

This isn’t speculation. It’s the FAA’s published timeline. The technology already works. What remains is the patient, deliberate work of building systems that humans genuinely trust, testing them in real operational environments with real consequences, and letting organizations slowly adapt their procedures and training to match.

AI won’t change air traffic control by replacing the controller. It’ll change it by making the controller more effective at the parts of the job that actually require human judgment — which is, it turns out, most of the important parts.
