Why Traditional Turbulence Warnings Still Fail Pilots

Turbulence prediction has gotten complicated with all the conflicting information flying around, and I mean that literally. I’ve been watching the same forecast loops for fifteen years. A PIREP (pilot report) comes in flagging moderate chop at flight level 350 near Denver. By the time dispatch pushes it out, the report is forty-five minutes stale. The aircraft that filed it is already two hundred miles downstream. That’s the core failure of historical turbulence detection: we’re permanently looking backward.

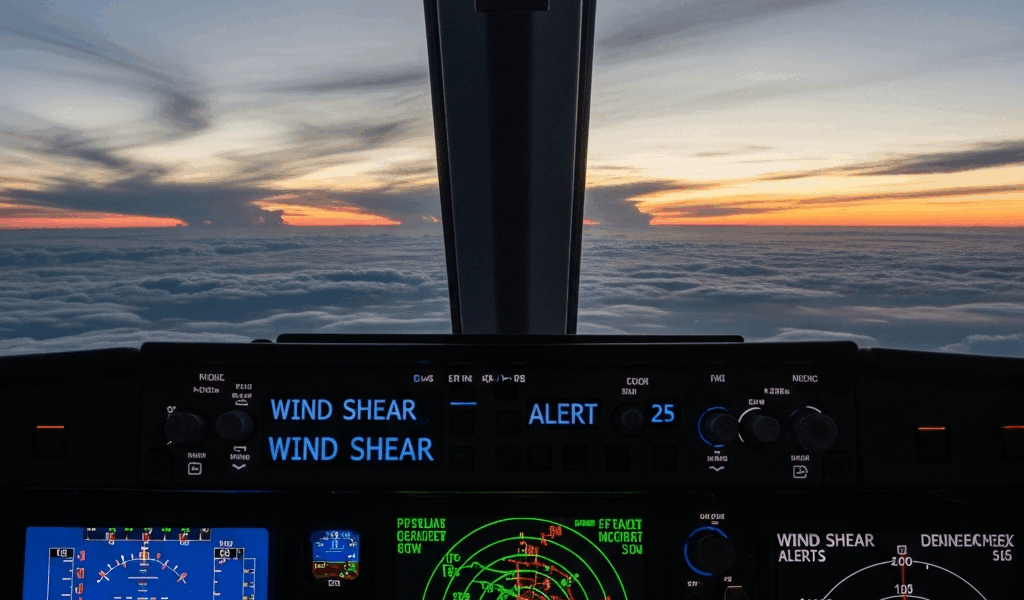

Radar doesn’t rescue you here either. It sees precipitation, hail, heavy cloud tops. Clear-air turbulence — the kind that feels like someone grabbed your fuselage and shook it hard — shows up as absolutely nothing on radar. Invisible to Doppler. Just air masses moving at mismatched speeds, generating shear layers that send zero reflection back to the antenna.

The NWS forecast models inside flight planning systems run at roughly 13-kilometer spatial resolution. At cruise altitude, that’s essentially useless. An actual turbulent layer might be two kilometers wide. The forecast reads smooth. You get thrown around anyway. The model was never granular enough to catch something that small.

This is where AI changes the game — not as magic, not as perfect clarity, but as a genuinely different approach to the same data sources that failed us before. It looks at what they’re telling us right now, across multiple timescales simultaneously, in ways human analysts and linear statistical models simply cannot replicate. Today, I’ll share everything I’ve learned about how that actually works.

What Data Sources AI Models Actually Pull From

Modern turbulence prediction is, in essence, a data fusion problem. Understanding how AI handles it means understanding the messy, incomplete data diet these systems actually consume. No single source wins. All of them fall short on their own.

Satellite imagery from GOES-16

The NOAA GOES-16 satellite sits in geostationary orbit, scanning the continental US every five to ten minutes. It captures infrared signatures of clouds, surface temperature, moisture profiles. A machine learning model ingests this imagery — actual pixels, not human-interpreted summaries — and learns to spot atmospheric instability: wind shear signatures at cloud top, rapid cooling patterns, convective organization that often precedes moderate to severe turbulence.

Alone, it’s limited as a prediction tool. A satellite image is a snapshot. It shows structure but no vertical velocity. Cloud motion in the frame doesn’t directly tell you what an aircraft will feel passing through.

Rawinsonde and pilot balloon data

Twice daily, the National Weather Service launches weather balloons from 92 fixed locations across the US. These rawinsondes measure temperature, humidity, wind speed, and wind direction at discrete altitude intervals — roughly every 100 meters in the lower atmosphere, sparser higher up. Accurate instruments. Severely limited schedule: two releases per day, fixed ground locations, 90 minutes of data collection per balloon.

They do directly measure wind shear, lapse rates, and moisture gradients. These are the physical parameters that actually create turbulence. An AI model seeing rawinsonde data knows what the real atmosphere looked like three hours ago at specific geographic points. That’s genuinely useful — even if it feels ancient by the time it arrives.
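To make those parameters concrete, here’s a minimal sketch of how vertical wind shear and lapse rate fall out of two adjacent sounding levels. The `Level` class, the function names, and the sample values are all my own inventions for illustration, not any NWS data format.

```python
import math
from dataclasses import dataclass


@dataclass
class Level:
    height_m: float       # height of the measurement
    temp_c: float         # air temperature
    wind_speed_kt: float  # wind speed
    wind_dir_deg: float   # direction the wind blows FROM (meteorological convention)


def wind_components(speed_kt, dir_deg):
    """Split a wind into eastward (u) and northward (v) components, in knots."""
    rad = math.radians(dir_deg)
    return -speed_kt * math.sin(rad), -speed_kt * math.cos(rad)


def layer_diagnostics(lower, upper):
    """Return (vector wind shear in kt per 1000 ft, lapse rate in deg C per km)."""
    dz_m = upper.height_m - lower.height_m
    u1, v1 = wind_components(lower.wind_speed_kt, lower.wind_dir_deg)
    u2, v2 = wind_components(upper.wind_speed_kt, upper.wind_dir_deg)
    shear_kt = math.hypot(u2 - u1, v2 - v1)       # magnitude of the wind change
    shear_per_1000ft = shear_kt / (dz_m / 304.8)  # 1000 ft = 304.8 m
    # Lapse rate is positive when temperature falls with height.
    lapse_c_per_km = -(upper.temp_c - lower.temp_c) / (dz_m / 1000.0)
    return shear_per_1000ft, lapse_c_per_km


# Made-up levels near cruise altitude, for illustration only.
sounding = [
    Level(9000.0, -40.0, 60.0, 270.0),
    Level(9600.0, -44.5, 75.0, 270.0),
    Level(10200.0, -49.0, 110.0, 280.0),
]

for lower, upper in zip(sounding, sounding[1:]):
    shear, lapse = layer_diagnostics(lower, upper)
    print(f"{lower.height_m:.0f}-{upper.height_m:.0f} m: "
          f"shear {shear:.1f} kt/1000 ft, lapse rate {lapse:.1f} C/km")
```

The shear is computed on the wind vector rather than on speed alone, so a layer where the wind backs or veers sharply still registers even if the speed barely changes.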

ACARS in-flight reports

Aircraft in cruise continuously broadcast ACARS data — Aircraft Communications Addressing and Reporting System — transmitting altitude, temperature, wind direction. Thousands of reports per hour cross North America alone. They’re biased samples, sure. More traffic on certain corridors, almost nothing over remote stretches. But they’re real time. They show where aircraft actually are and what they’re actually experiencing — not what a model guessed they’d experience.

Modern AI systems ingest ACARS streams and extract atmospheric parameters from the gap between what sensors report and what they should report if conditions matched the forecast. That residual signal? Often turbulence. Don’t overlook it.
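A rough sketch of that residual idea, under heavy assumptions: the temperatures are invented, and the z-score test is a simple stand-in I picked for illustration. I’m not claiming any operational system flags residuals this way.

```python
from statistics import mean, pstdev


def residuals(observed, forecast):
    """Observed minus forecast, point by point along the track."""
    return [o - f for o, f in zip(observed, forecast)]


def flag_anomalies(values, z_threshold=2.0):
    """Return indices whose value sits more than z_threshold std devs from the mean."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return []  # perfectly flat series, nothing stands out
    return [i for i, v in enumerate(values) if abs(v - mu) / sigma > z_threshold]


# Hypothetical outside-air temperatures (deg C) from six consecutive reports,
# next to what the forecast model expected at the same points.
observed_temp = [-52.0, -51.8, -52.1, -48.5, -52.2, -51.9]
forecast_temp = [-52.0, -52.0, -52.0, -52.0, -52.0, -52.0]

temp_residuals = residuals(observed_temp, forecast_temp)
suspect_reports = flag_anomalies(temp_residuals)
print("reports worth a closer look:", suspect_reports)
```

In a real pipeline the residuals themselves would more likely become model features than trigger a hard flag, but the flag makes the signal easy to see.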

ECMWF numerical weather models

The European Centre for Medium-Range Weather Forecasts model runs twice daily at 9-kilometer horizontal resolution at altitude. It’s the foundation layer: wind fields, temperature profiles, vertical velocity estimates out to ten days. Not precise enough to catch turbulence directly — the grid cells are bigger than the turbulence itself — but it provides large-scale atmospheric context. Where’s the jet stream sitting? How strong is the wind shear between 30,000 and 35,000 feet? Without that backdrop, everything else is noise.

EDR measurements — Eddy Dissipation Rate

Eddy dissipation rate is a physics-based metric that quantifies how quickly turbulent energy dissipates, which makes it a direct measure of turbulence intensity. Modern commercial aircraft measure it onboard. The numbers flow back to operators via ACARS or specialized turbulence-reporting pipelines. An EDR value of 0.3 is light. At 0.4 it starts feeling genuinely uncomfortable. Above 0.6, passengers definitely notice: coffee cups move on their own. Exact category boundaries shift with aircraft size and the reporting standard in use. EDR is the closest thing we have to a ground-truth turbulence measurement from the real atmosphere in real time.

Here’s the crucial part, and honestly I probably should have opened with it. None of these sources alone predicts turbulence reliably. A satellite sees structure but no vertical motion. A numerical model has grid cells bigger than the turbulence itself. ACARS data is observations, not predictions. Data fusion, feeding all of them into a single machine learning architecture simultaneously, is where the actual capability lives. The model learns relationships between satellite texture, rawinsonde wind shear measurements, real aircraft reports, and what turbulence typically follows each combination. That’s what makes this approach so different from what came before.
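At its simplest, fusion means lining every source up into one fixed-layout feature row. Here’s a minimal sketch, assuming hypothetical field names and freshness limits that I made up for illustration. Stale or absent sources become NaN, which tree libraries such as XGBoost and LightGBM can treat as a missing value.

```python
import math

# (source, field) pairs in a fixed order so every row has the same layout.
# All names here are hypothetical.
FEATURES = [
    ("satellite", "cloud_top_temp_k"),
    ("sounding", "shear_kt_per_1000ft"),
    ("acars", "temp_residual_c"),
    ("nwp", "jet_speed_kt"),
]

# Assumed freshness limits in minutes; a real system would tune these.
MAX_AGE_MIN = {"satellite": 15, "sounding": 720, "acars": 30, "nwp": 720}


def build_feature_row(sources):
    """Merge the latest record from each source into one list of floats."""
    row = []
    for source, field in FEATURES:
        record = sources.get(source)
        fresh = record is not None and record["age_min"] <= MAX_AGE_MIN[source]
        row.append(float(record[field]) if fresh else math.nan)
    return row


latest = {
    "satellite": {"age_min": 5, "cloud_top_temp_k": 215.0},
    "sounding": {"age_min": 180, "shear_kt_per_1000ft": 12.0},
    "acars": {"age_min": 45, "temp_residual_c": 3.5},  # too old, dropped
    "nwp": {"age_min": 360, "jet_speed_kt": 140.0},
}

row = build_feature_row(latest)
print(row)
```

Keeping the gap as NaN instead of filling in a guess lets the model learn for itself how much to trust a row with a source missing.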

The Machine Learning Models Doing the Heavy Lifting

Turbulence prediction in operational aviation currently runs on three main architectural approaches — often simultaneously, often blended.

Gradient boosted decision trees

XGBoost and LightGBM dominate tabular atmospheric data processing. Feed them a row of inputs: satellite brightness temperature at 12 wavelengths, wind shear derived from rawinsonde vertical profiles, jet stream proximity, ACARS temperature residuals, time of day, geographic coordinates. The trees learn non-linear relationships. “When shear exceeds 30 knots between 25,000 and 35,000 feet and satellite shows this specific texture pattern and the jet is at this latitude, turbulence follows within two hours.” The model doesn’t explain why — it’s a black box — but it learns reliably from thousands of historical EDR examples.

Gradient boosting handles weather data well because atmospheric relationships are non-linear and threshold-dependent. A little more wind shear doesn’t cause proportionally more turbulence. There’s frequently a cliff edge where conditions suddenly destabilize — and tree-based models catch those edges better than linear approaches.
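The cliff-edge point is easy to demonstrate with a toy. The sketch below fits a straight line and a single-split tree (a stump, the building block that boosting stacks by the hundreds) to made-up data where turbulence intensity jumps once shear reaches 30 knots. The data and function names are illustrative assumptions, and a lone stump is far simpler than what XGBoost or LightGBM actually train.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    mx, my = mean(xs), mean(ys)
    slope = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
             / sum((x - mx) ** 2 for x in xs))
    return slope, my - slope * mx


def fit_stump(xs, ys):
    """Find the single threshold split that minimizes squared error."""
    pairs = sorted(zip(xs, ys))
    best = None
    for i in range(1, len(pairs)):
        if pairs[i][0] == pairs[i - 1][0]:
            continue  # no room for a split between identical x values
        threshold = (pairs[i - 1][0] + pairs[i][0]) / 2
        left = [y for _, y in pairs[:i]]
        right = [y for _, y in pairs[i:]]
        left_mean, right_mean = mean(left), mean(right)
        sse = (sum((y - left_mean) ** 2 for y in left)
               + sum((y - right_mean) ** 2 for y in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    _, threshold, left_mean, right_mean = best
    return threshold, left_mean, right_mean


def mse(preds, ys):
    return mean([(p - y) ** 2 for p, y in zip(preds, ys)])


# Synthetic data: smooth air below 30 kt of shear, rough at and above it.
shear_kt = [float(s) for s in range(10, 51, 2)]
edr = [0.05 if s < 30 else 0.45 for s in shear_kt]

slope, intercept = fit_line(shear_kt, edr)
line_mse = mse([slope * s + intercept for s in shear_kt], edr)

threshold, low, high = fit_stump(shear_kt, edr)
stump_mse = mse([low if s < threshold else high for s in shear_kt], edr)

print(f"line MSE {line_mse:.4f}, stump MSE {stump_mse:.4f}, split at {threshold} kt")
```

The line has to smear the jump across the whole shear range, while the stump puts its one split right at the edge.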

LSTM networks for temporal sequences

Long Short-Term Memory networks are recurrent neural networks built specifically to learn patterns across time series. A turbulence-focused LSTM ingests sequences: atmospheric profiles from the past six hours, satellite imagery trends, ACARS wind reports evolving over time. The network learns to project forward — “this sequence of atmospheric changes typically produces turbulence in the next two hours, traveling in this direction at roughly this altitude.”

LSTMs handle the temporal evolution component that static feature models miss entirely. Turbulence doesn’t materialize from nowhere. Shear layers develop gradually. Convective systems organize over time. An LSTM trained on EDR reports and satellite sequences learns the signature evolution that precedes a rough ride — not just the snapshot conditions at a single moment.
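Before an LSTM sees anything, the time series has to be cut into fixed-length input windows paired with a future target. Here’s a minimal sketch of that step, assuming hourly data, a six-hour lookback, and a two-hour horizon. The function name and the toy series are my own, and the network itself is left out.

```python
def make_windows(series, lookback, horizon):
    """Slice a time series into (input window, future target) pairs.

    series: list of (features, edr) tuples, one per time step.
    Each input is `lookback` consecutive feature vectors; its target is the
    EDR observed `horizon` steps after the last step in the window.
    """
    inputs, targets = [], []
    for end in range(lookback, len(series) - horizon + 1):
        inputs.append([features for features, _ in series[end - lookback:end]])
        targets.append(series[end + horizon - 1][1])
    return inputs, targets


# Ten hourly steps of made-up data: ([shear, jet speed], observed EDR).
hourly = [([float(t), 20.0 + t], 0.05 * t) for t in range(10)]

X, y = make_windows(hourly, lookback=6, horizon=2)
print(len(X), "windows, each", len(X[0]), "steps long")
```

Each window only contains data from before its target time, which is what keeps the model from accidentally training on the future.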

Ensemble models combining both

The NCAR GTG system (Graphical Turbulence Guidance, the most cited research benchmark in operational aviation) is the best-known example of the ensemble idea: it combines multiple turbulence diagnostics, weighted by their historical skill. Machine learning ensembles extend the same principle, blending tree-based outputs with neural network outputs and weighting them by skill across different atmospheric regimes. Clear-air turbulence over the Rockies? One weighting combination performs best. Convective turbulence over the Gulf? A different one takes over. Such a system doesn’t output a single number. It generates probability grids: 30% chance of light turbulence, 8% moderate, 2% severe, at each grid point and altitude, for each six-hour forecast window.

That’s what makes ensemble prediction so appealing to forecasters and flight dispatchers. The models aren’t competing. They’re running in parallel, each learning different aspects of the same chaotic problem. An ensemble that ignores any one of them leaves real information on the table.
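Regime-dependent weighting like this can be sketched in a few lines. The regime names, weights, and probabilities below are invented for illustration and are not taken from GTG or any other operational system.

```python
# Hypothetical skill-based weights per atmospheric regime.
REGIME_WEIGHTS = {
    "mountain_cat": {"gbdt": 0.7, "lstm": 0.3},
    "convective": {"gbdt": 0.4, "lstm": 0.6},
}


def blend(prob_by_model, regime):
    """Weighted average of each model's category probabilities for one grid point."""
    weights = REGIME_WEIGHTS[regime]
    total = sum(weights.values())
    categories = next(iter(prob_by_model.values())).keys()
    return {
        category: sum(weights[m] * prob_by_model[m][category] for m in weights) / total
        for category in categories
    }


# Made-up outputs from two models at a single grid point and altitude.
grid_point = {
    "gbdt": {"light": 0.30, "moderate": 0.10, "severe": 0.02},
    "lstm": {"light": 0.40, "moderate": 0.05, "severe": 0.02},
}

over_rockies = blend(grid_point, "mountain_cat")
over_gulf = blend(grid_point, "convective")
print(over_rockies)
print(over_gulf)
```

The same two model outputs give different blended probabilities depending on the regime, which is the whole point of weighting by historical skill.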

How Far Out Can AI Actually See Turbulence

Skill scores from NCAR research, measured against observed EDR data and actual PIREPs, show gradient boosted models achieve useful discrimination skill out to roughly four to six hours for moderate to severe turbulence. Light turbulence prediction extends further but with lower confidence. Beyond eight hours, atmospheric evolution has accumulated enough error that model accuracy approaches climatological skill, essentially just predicting what’s historically typical for that season, region, and altitude.
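One common way to express "skill relative to climatology" is the Brier skill score. I’m using it here as an illustrative stand-in, since I can’t say it’s the exact metric behind those NCAR numbers, and the forecasts and outcomes below are made up.

```python
def brier_score(probs, outcomes):
    """Mean squared difference between forecast probability and what happened (0 or 1)."""
    return sum((p - o) ** 2 for p, o in zip(probs, outcomes)) / len(outcomes)


def brier_skill_score(probs, outcomes, climatology):
    """1.0 is a perfect forecast; 0.0 is no better than always forecasting climatology."""
    reference = brier_score([climatology] * len(outcomes), outcomes)
    return 1.0 - brier_score(probs, outcomes) / reference


# Ten hypothetical flight segments; 1 means moderate-or-greater turbulence occurred.
occurred = [0, 0, 1, 0, 1, 0, 0, 0, 1, 0]
base_rate = 0.3  # assumed climatological frequency for this toy sample

short_lead = [0.1, 0.1, 0.8, 0.2, 0.7, 0.1, 0.2, 0.1, 0.9, 0.1]        # sharp forecast
long_lead = [0.3, 0.25, 0.35, 0.3, 0.35, 0.3, 0.3, 0.25, 0.35, 0.3]   # hugs the base rate

bss_short = brier_skill_score(short_lead, occurred, base_rate)
bss_long = brier_skill_score(long_lead, occurred, base_rate)
print(f"short lead BSS {bss_short:.2f}, long lead BSS {bss_long:.2f}")
```

As lead time grows and forecasts drift toward the base rate, the score slides toward zero, which is what "approaches climatological skill" looks like in numbers.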

Clear-air turbulence is significantly harder than convective turbulence. Thunderstorms show up on radar and satellite. They’re large, energetic, visible structures. Wind shear layers generating CAT can be 50 kilometers wide and only 500 meters thick. Harder to detect. Harder to predict. Don’t make the mistake of expecting the same confidence levels across both types.

Current operational systems, and this is the honest answer, are useful roughly one to six hours ahead depending on turbulence type and geographic region. A dispatch briefing six hours before departure can flag a potential moderate turbulence encounter along your routing with reasonable confidence. Two hours out, the prediction is quite good. One hour before pushback, it sharpens further. Four hours ahead and beyond, you use it as one input among several, not as the final word.

Skill varies geographically too. Over the High Plains, with consistent wind patterns and solid rawinsonde network coverage, prediction skill runs higher. Over the North Atlantic, where rawinsonde data is sparse and wind patterns shift rapidly, skill drops noticeably. I check three different tools before a transatlantic crossing. Layering Turbli over the dispatch briefing works for me; relying on either one alone never quite cuts it.

What This Means for Pilots Flying Right Now

So, without further ado, let’s talk about what’s actually available operationally today. This technology has already entered commercial and general aviation. Turbli — a German startup — uses ECMWF model data and machine learning to generate dynamic turbulence maps displayed on iPad apps pilots use for pre-flight planning. FlightAware Foresight integrates turbulence prediction directly into dispatch briefing workflows. Major carriers run internal models feeding their operations control centers data updated every few minutes.

The practical shift is subtle but genuinely important. Your dispatch briefing now reads: “Moderate turbulence possible between flight levels 340 and 360 from ABCDE to FGHIJ between 1400Z and 1600Z, 60% confidence.” Instead of a two-hour-old PIREP saying someone was bumpy at 35,000. The briefing is forward-looking. It’s probabilistic rather than binary. You can actually plan around it.

You won’t need a dedicated weather office, but you will need a handful of reliable tools. Turbli runs on Android and iOS at roughly $50–100 per year depending on subscription tier. Commercial FlightAware subscriptions run $200-plus annually and include dedicated turbulence products. Some airline crew apps include carrier-specific models if your operator has deployed them, so if you fly commercially, check what your carrier already provides first. None of these tools are free. All of them integrate model output with live satellite and ACARS data streams. Paid subscriptions are probably the best option, because meaningful turbulence prediction requires continuous data ingestion and model updates, and real-time ACARS and satellite feeds aren’t cheap infrastructure to maintain.

Operationally, the AI prediction doesn’t replace judgment. Forecast winds still drive routing decisions. You still request lower if the ride deteriorates. You still coordinate with center for deviations. What changes is the quality of the question you ask before takeoff.

Where this heads over the next decade: ensemble models will grow larger, incorporating more data sources in closer to real time, with update latency dropping below two minutes from sensor to display. Mobile connectivity improvements will push more computation to the flight deck itself rather than depending on server round-trips. Augmented reality overlays on glass cockpits could eventually display predicted turbulence corridors directly on the navigation map. Still ten to fifteen years from operational reality, probably. But the foundation — data fusion, ensemble methods, sequence learning across time — is already proven and already flying.
