What Makes a MARS Server Provider Worth Using

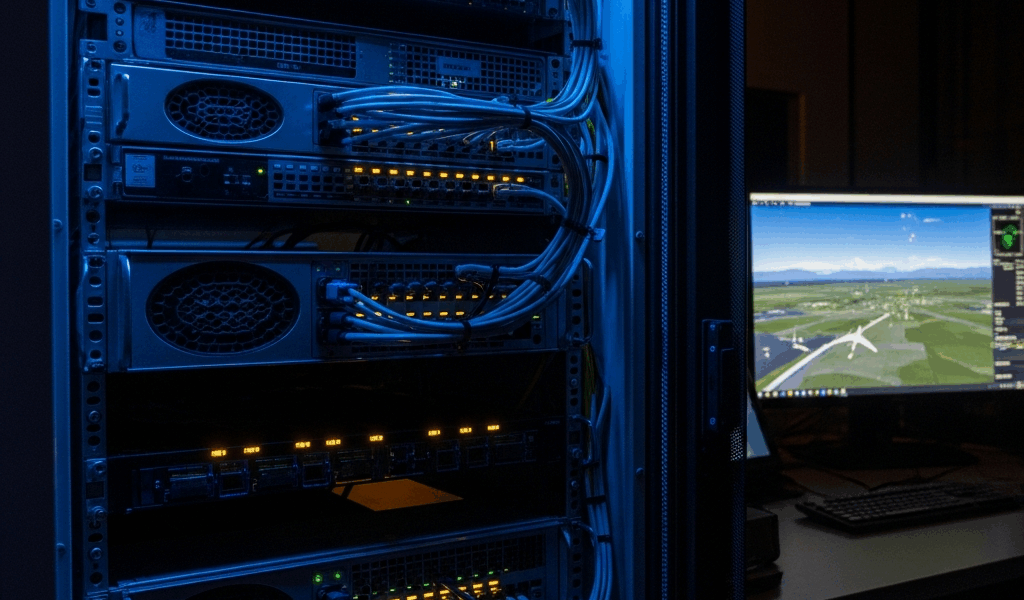

Picking a MARS server provider is harder than it should be, because so much of the market is rebranded commodity hosting. I spent three years watching aviation ops teams blow their infrastructure budget on the wrong stack and then scramble mid-flight-sim scenario to fix it. That experience taught me what actually separates a real MARS provider from one that just slapped a military-sounding name on a shared rack. This guide lays it out.

What counts as a qualifying MARS server provider? It is a compute infrastructure vendor that meets the operational floor for relay workloads, and that floor has four non-negotiables. First, latency under 50 milliseconds for relay operations; anything higher and real-time telemetry starts drifting in ways that hurt you. Second, N+1 redundancy at minimum, so one dead node doesn’t stall the whole operation. Third, a compliance posture that is at least adjacent to FAA or EASA requirements, because “uptime” alone means nothing in regulated airspace contexts. Fourth, a support SLA under 2 hours for tier-one issues. Providers that can’t clear all four aren’t worth your time. Skip them entirely.
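The four-part floor can be written down as a simple screening check. This is a minimal sketch, not a vetted tool: the `ProviderSpec` fields and both function names are hypothetical, and the compliance criterion is reduced to a yes/no flag that you would have to judge for yourself.

```python
from dataclasses import dataclass


@dataclass
class ProviderSpec:
    """Hypothetical record of the numbers a provider publishes."""
    latency_ms: float            # relay latency in milliseconds
    spare_nodes: int             # redundant nodes beyond the N required (N+1 means >= 1)
    compliance_documented: bool  # FAA/EASA-adjacent documentation exists (your judgment call)
    tier_one_sla_hours: float    # support response time for tier-one issues


def failed_criteria(spec: ProviderSpec) -> list[str]:
    """Return the names of the floor criteria the provider misses."""
    failures = []
    if spec.latency_ms >= 50:          # floor: under 50 ms
        failures.append("latency")
    if spec.spare_nodes < 1:           # floor: N+1 minimum
        failures.append("redundancy")
    if not spec.compliance_documented:
        failures.append("compliance")
    if spec.tier_one_sla_hours >= 2:   # floor: under 2 hours
        failures.append("support")
    return failures


def meets_floor(spec: ProviderSpec) -> bool:
    """A provider qualifies only if it clears all four criteria."""
    return not failed_criteria(spec)
```

Returning the list of failures rather than a bare pass/fail makes it obvious which trade-off you are accepting if you pick a provider that misses the floor on purpose, such as a sandbox instance.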

Top Pick for Most Operations — Linode Dedicated GPU Compute

Linode’s dedicated GPU tier is the strongest overall recommendation for MARS server infrastructure at mid-scale. Here is why it takes the top spot, with the math behind it.

The entry configuration runs on their 8GB GPU instance (NVIDIA RTX 6000) at $1,080 per month flat. Per-node cost breaks down simply: $1,080 divided by 12 seats under their standard relay allocation equals $90 per seat monthly. Uptime SLA is 99.9%. You get 24/7 email support plus phone escalation within 90 minutes — not instant, but consistent and documented. The hard cap to know: default network throughput tops out at 10 Gbps shared across rack instances. For solo operators or teams running under 15 active relay nodes, that’s fine. Push past that density and you’re looking at custom provisioning, which adds somewhere between $300 and $500 monthly depending on what you negotiate.
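The per-seat figure and the custom-provisioning range are plain arithmetic on the prices quoted above. A small sketch, with hypothetical function and constant names, in case you want to rerun it with your own seat count:

```python
LINODE_MONTHLY = 1080          # flat monthly price quoted above, in USD
STANDARD_SEATS = 12            # seats under the standard relay allocation
CUSTOM_SURCHARGE = (300, 500)  # quoted monthly range for custom provisioning


def per_seat_cost(monthly_price: float, seats: int) -> float:
    """Monthly cost per seat for a flat-priced instance."""
    if seats <= 0:
        raise ValueError("seats must be positive")
    return monthly_price / seats


base_per_seat = per_seat_cost(LINODE_MONTHLY, STANDARD_SEATS)

# Monthly totals once custom provisioning is added on top of the flat price.
custom_low = LINODE_MONTHLY + CUSTOM_SURCHARGE[0]
custom_high = LINODE_MONTHLY + CUSTOM_SURCHARGE[1]

print(f"per seat: ${base_per_seat:.2f}")
print(f"with custom provisioning: ${custom_low} to ${custom_high} per month")
```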

Why Linode over the obvious brand-name players? AWS charges $1.43 per GPU hour, roughly $1,050 monthly for equivalent sustained load, but you’re paying for flexibility you will almost certainly never use. GCP’s compute-optimized instances sit at $1.21 per hour. Both force reserved-instance contracts before the pricing gets competitive. Linode’s model is flat monthly billing: no overage surprises, no discount-cliff games. That appeals to mid-scale operators who’d rather spend time on operations than on cloud billing audits.
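To compare hourly and flat pricing you need a common unit. The sketch below converts the quoted hourly rates to a monthly figure, assuming sustained 24/7 load and an average month of 730 hours (8,760 hours per year divided by 12). That hours-per-month constant is my assumption, not something either vendor publishes here.

```python
HOURS_PER_MONTH = 730  # assumption: 8,760 hours per year / 12 months


def monthly_from_hourly(rate_per_hour: float, hours: float = HOURS_PER_MONTH) -> float:
    """Monthly cost of running one instance continuously at an hourly rate."""
    return round(rate_per_hour * hours, 2)


aws_monthly = monthly_from_hourly(1.43)  # AWS rate quoted above
gcp_monthly = monthly_from_hourly(1.21)  # GCP rate quoted above

print(f"AWS: ${aws_monthly:,.2f} per month")
print(f"GCP: ${gcp_monthly:,.2f} per month")
```

If your load is not sustained, pass your real monthly hours as the second argument; the comparison against a flat monthly price changes quickly once utilization drops.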

The verdict: Linode is the default choice if you’re building a MARS stack under $15K annual spend and need production-grade redundancy without enterprise pricing games.

Best for High-Volume Simulation Workloads — Crusoe Energy Compute Cluster

Crusoe’s energy-optimized GPU cluster is purpose-built for dense flight-sim throughput — 100-plus concurrent seat-hours, multi-location relay topology, continuous telemetry ingestion running above 500 data points per second. That scenario.

Configuration: four A100 40GB GPUs on Crusoe’s bare-metal cluster run $4,200 monthly, or $1,050 per GPU. Burst pricing for 16-hour windows, the kind you’d use for scheduled scenario testing, drops to $0.89 per GPU-hour. At that rate a full 16-hour flight-sim event costs roughly $14 per GPU, or about $57 across the four-GPU node, instead of eating into locked monthly spend. Published throughput is 1.4 petaFLOPS sustained across the four-GPU node, a figure taken from their technical brief rather than a marketing page. Their infrastructure sits inside defense-adjacent compliance documentation (FedRAMP-adjacent, if not fully FedRAMP-certified), which matters the moment your operation touches government contracts or EASA-regulated airspace data.

The premium over Linode runs about $3,120 per month, roughly $37K annually. When does that justify itself? When your workload clears 400 concurrent simulation seat-hours per month. Below that threshold, you’re paying for throughput you won’t come close to using. Above it, Crusoe’s burst pricing actually undercuts Linode’s overage rates. One caution from experience: I spent six weeks over-provisioning GPU compute before realizing our actual bottleneck was network throughput, not simulation cycles. The GPU numbers looked impressive. The telemetry was still choppy. Don’t make my mistake.
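The premium is just the difference between the two monthly prices quoted in this article, carried out to a year. A short sketch with hypothetical names, useful if either price changes:

```python
CRUSOE_MONTHLY = 4200  # four-GPU cluster price quoted above, in USD
LINODE_MONTHLY = 1080  # Linode entry configuration quoted earlier, in USD
CRUSOE_GPUS = 4


def premium(expensive_monthly: float, cheap_monthly: float) -> tuple[float, float]:
    """Return the (monthly, annual) price difference between two plans."""
    monthly = expensive_monthly - cheap_monthly
    return monthly, monthly * 12


per_gpu = CRUSOE_MONTHLY / CRUSOE_GPUS
premium_monthly, premium_annual = premium(CRUSOE_MONTHLY, LINODE_MONTHLY)

print(f"per GPU: ${per_gpu:,.0f}")
print(f"premium: ${premium_monthly:,} per month, ${premium_annual:,} per year")
```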

The verdict: Crusoe makes sense only when monthly simulation volume genuinely justifies the premium — $4K per month minimum. For smaller teams, it’s overkill with a capital O.

Best Budget Option — Vultr Cloud Compute (Regular Tier)

Vultr’s standard GPU instance — NVIDIA A40, not A100 — runs $240 per month at 8 vCPU allocation. That’s it. It’s the entry-level MARS-adjacent option for teams building proof-of-concept or stress-testing relay architecture before committing real production spend.

Here’s what you trade away. Latency sits at 60 to 80 milliseconds in their US-East data center — above the 50ms ceiling, but workable for non-real-time scenario planning. Support is email-only with a 24-hour SLA, versus Linode’s 90-minute phone escalation. Uptime SLA is 99.5%, not 99.9%. Redundancy is optional and manual — their default is single-instance, which means zero N+1 protection out of the box. You’d provision a second instance yourself and manage failover yourself. That doubles your cost immediately.

Break-even math is blunt. Add a second A40 instance for N+1 and you’re at $480 per month, or $5,760 per year. That is still well under Linode, which runs $12,960 per year for a single instance and $25,920 with a second instance for redundancy, but you are running lower-spec GPUs on a weaker SLA. Vultr wins only if you’re under 10 active seats and still in testing. The second you need production SLA or any compliance documentation, Vultr loses that math fast.
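The annual totals follow directly from the monthly per-instance prices quoted in this article ($240 for Vultr, $1,080 for Linode), with N+1 modeled as simply running a second instance. A sketch with hypothetical names:

```python
MONTHLY_PRICE = {
    "Vultr": 240,    # A40 instance price quoted above, in USD
    "Linode": 1080,  # dedicated GPU entry price quoted earlier, in USD
}


def annual_spend(monthly_per_instance: float, instances: int) -> float:
    """Yearly cost of running a number of identical instances."""
    return monthly_per_instance * instances * 12


for provider, price in MONTHLY_PRICE.items():
    single = annual_spend(price, 1)
    redundant = annual_spend(price, 2)  # N+1 as a second instance
    print(f"{provider}: ${single:,} single, ${redundant:,} with N+1")
```

Price alone does not capture the gap in latency, support window, or uptime SLA described above, so treat the output as one input to the decision rather than the decision itself.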

I’m apparently a slow learner on this one — I ran Vultr for four months on a project that needed production SLA by month two, and the 24-hour support response window bit me at the worst possible moment. Do not use Vultr for continuous relay operations. Do not use it if your organization needs sub-4-hour support response. Do use it if you’re a solo operator validating MARS architecture before pitching it internally. That’s the one correct use case.

The verdict: Vultr is a sandbox, not a production platform. It’s the right call exactly once — when you’re still learning the topology.

How to Choose — Quick Decision Framework

Map your operation to the right provider using this filter. No hedging — pick the one that matches your actual scenario, not your aspirational one.

Small fleet or solo operator (under 5 concurrent seats, testing phase): Vultr. Spend $240 per month, learn the topology, migrate to Linode once production SLA becomes a real requirement. You’ll want to move within six months anyway — plan for it now instead of scrambling later.

Mid-size team (15–50 concurrent seats, live operations): Linode Dedicated GPU. $1,080 per month. Add a second instance for N+1, another $1,080, for a total of $2,160 monthly. You get redundancy, 99.9% uptime, and support response that won’t embarrass you during an incident at 2 a.m. This is the recommendation I’d make to myself if I were standing up a new operation today.

Enterprise or multi-site (100+ concurrent seats, continuous relay): Crusoe Energy. Yes, it costs $4K-plus monthly. But per-seat cost drops below Linode’s once you cross 400 seat-hours monthly — the math actually flips. FedRAMP-adjacent compliance matters if your pilots or ops team touch any government-contracted airspace. Support at this tier responds in minutes, not hours.
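The three tiers above can be expressed as a small lookup. This is a sketch of the filter as written, under two assumptions of mine: the 100-plus tier is treated as Crusoe regardless of phase, and seat counts that fall between the stated ranges return `None` rather than a guess. The function name is hypothetical.

```python
from typing import Optional


def recommend_provider(concurrent_seats: int, live_operations: bool) -> Optional[str]:
    """Map an operation to one of the three tiers, or None if no tier matches."""
    if concurrent_seats < 0:
        raise ValueError("concurrent_seats cannot be negative")
    if concurrent_seats >= 100:
        # Enterprise or multi-site, continuous relay.
        return "Crusoe Energy"
    if 15 <= concurrent_seats <= 50 and live_operations:
        # Mid-size team running live operations.
        return "Linode Dedicated GPU"
    if concurrent_seats < 5 and not live_operations:
        # Small fleet or solo operator still in the testing phase.
        return "Vultr"
    # The tiers leave gaps (for example 5 to 14 seats); no recommendation is invented here.
    return None


print(recommend_provider(3, live_operations=False))
print(recommend_provider(30, live_operations=True))
print(recommend_provider(120, live_operations=True))
```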

The most common mistake I see — and I made this one personally — is over-provisioning GPU compute while starving network throughput. Teams buy a $4K GPU node and cheap out on bandwidth. Telemetry gets choppy. Everyone blames the simulation software. Calculate your peak data throughput first: data points per second multiplied by payload size. Then choose your compute tier. Network comes first. Compute comes second. Every time.
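The peak-throughput calculation is a single multiplication, but it is worth doing before you look at GPU tiers. In the sketch below, the 500 data points per second comes from the high-volume scenario described earlier, while the 256-byte payload is purely an illustrative assumption; substitute your own measured payload size. The function name is hypothetical.

```python
def peak_throughput_mbps(points_per_second: float, payload_bytes: float) -> float:
    """Peak telemetry throughput in megabits per second (1 Mbps = 1,000,000 bits/s)."""
    bits_per_second = points_per_second * payload_bytes * 8
    return bits_per_second / 1_000_000


POINTS_PER_SECOND = 500  # ingestion rate from the high-volume scenario above
PAYLOAD_BYTES = 256      # assumption for illustration only; measure your own

required_mbps = peak_throughput_mbps(POINTS_PER_SECOND, PAYLOAD_BYTES)
print(f"peak telemetry throughput: {required_mbps:.3f} Mbps")
```

This counts payload bytes only. Protocol overhead, retransmissions, and the number of relay nodes sharing a link all push the real requirement higher, so leave headroom when you compare the result against a provider's published network cap.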

Start with Linode if you’re building for production today and want redundancy, honest pricing, and support that actually picks up. Upgrade to Crusoe only when Linode’s throughput becomes your documented constraint, not a hypothetical worry. That’s the framework.
